Summary

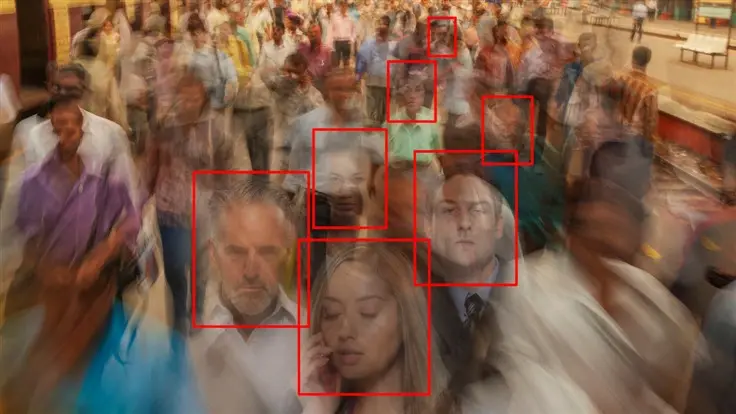

- Detroit woman wrongly arrested for carjacking and robbery due to facial recognition technology error.

- Porcha Woodruff, 8 months pregnant, mistakenly identified as culprit based on outdated 2015 mug shot.

- Surveillance footage did not match the identification; victim wrongly identified Woodruff from a lineup based on the outdated 2015 photo.

- Woodruff arrested, detained for 11 hours, charges later dismissed; she files lawsuit against Detroit.

- Facial recognition technology’s flaws in identifying women and people with dark skin highlighted.

- Several US cities banned facial recognition; debate continues due to lobbying and crime concerns.

- Law enforcement prioritized technology’s output over visual evidence, raising questions about its integration.

- ACLU Michigan involved; outcome of lawsuit uncertain, impact on law enforcement’s tech use in question.

This is ridiculous. It’s fortunate for her that she was pregnant.

One would hope that this would be used as a case study on why this technology is dangerous, especially when in the hands of obviously incompetent or just malicious actors, but I doubt that’ll happen.

The fact that she was pregnant had no bearing on the case being dropped; it only got dropped because the alleged victim didn’t appear in court. I wish her well in the lawsuit.

That’s good info; this article was pretty ambiguous about the actual case itself and made it sound like her pregnancy was the deciding factor.

It’s absolutely ridiculous that the pregnancy didn’t just invalidate the entire thing, though…

Facial recognition (and AI) is a great tool to use as a double check.

“Hey I think we found her because her car is parked there, is that her?” AI says it’s 90% sure it’s her, sounds good let’s go.

Or entering through an airport gate after scanning your passport. Great double check.

It should never be the first line to find people out of a crowd. That’s how we slide into the dystopia.

You’re right. Hopefully they will have rules like this. But knowing police, even if these rules did exist many departments would still go “Facial match? Good enough”

I think the appropriate procedure depends on the ratio of true/false positives/negatives. This is basically the same discussion that occurred with covid tests, because the mathematics behind it is identical. Looking at the Positive/Negative Predictive Value should give you an idea of how much you should trust each assessment.

Based on the articles we’ve seen recently, it seems that the false positives are the main problem here, so perhaps the PPV isn’t high enough. Ideally, you would combine two types of methods so that at the end of the day you’ll get to a very high PPV and NPV. However, I’m pretty sure humans have a very low NPV. Hopefully, the PPV is a lot higher, but racism clearly isn’t helping here. Augmenting that appalling mess with a flawed system is still a step forward IMO. Well, at least it would be if the system wasn’t used to oppress and discriminate against people.
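For anyone who wants to play with the PPV/NPV idea, here is a minimal sketch using the standard Bayes-rule definitions. The function name and all the numbers are made up for illustration; they are not measured rates for any real facial recognition system or for human witnesses.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a binary test.

    sensitivity: P(test positive | actually a match)
    specificity: P(test negative | actually not a match)
    prevalence:  P(actually a match) among the people being tested
    All three are assumed to lie strictly between 0 and 1.
    """
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    true_neg = specificity * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence

    ppv = true_pos / (true_pos + false_pos)  # P(match | test positive)
    npv = true_neg / (true_neg + false_neg)  # P(no match | test negative)
    return ppv, npv


# Hypothetical numbers: a "99% accurate" system searching for one
# person among 10,000 candidates.
ppv, npv = predictive_values(sensitivity=0.99, specificity=0.99, prevalence=1 / 10_000)
print(f"PPV = {ppv:.4f}, NPV = {npv:.6f}")
```

With those assumed inputs the PPV comes out at roughly 1%, i.e. almost every hit is a false positive, even though the NPV is very close to 1. The same system used as a double check on an already likely suspect (say, a prevalence of 0.5) would have a PPV of 0.99, which is the covid-test effect in a nutshell.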

This is the issue with big data. With a big enough database, you will always find a closest match that seems pretty good. False positives are a huge concern in any big data approach… and couple that with lazy policing, well, you get this.
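A rough way to see the database-size effect, assuming each comparison has the same small, independent chance of a false match (a simplification; real systems won't behave this cleanly). The function name and the rate used below are hypothetical.

```python
def false_match_stats(false_match_rate, database_size):
    """Return (expected false matches, probability of at least one).

    Assumes every comparison against the database is independent and
    has the same per-comparison false match rate.
    """
    expected = false_match_rate * database_size
    p_at_least_one = 1 - (1 - false_match_rate) ** database_size
    return expected, p_at_least_one


# Hypothetical rate of 1 false match per 100,000 comparisons.
for size in (100, 10_000, 1_000_000):
    expected, p_any = false_match_stats(1e-5, size)
    print(f"{size:>9} faces: expected false matches = {expected:.3f}, "
          f"P(at least one) = {p_any:.4f}")
```

Under these assumptions, a false match is rare against a small gallery but close to guaranteed against a million faces, so "the system found a match" says very little on its own.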

This tech should be illegal outside of entertainment purposes imo. Things like Snapchat filters are fine, but using it to arrest people or advertise to people is straight up dystopian insanity.

I’m not sure it should be illegal, since it can be legitimately useful, but maybe something like “inconclusive evidence that isn’t enough to grant a warrant”. That way, you can get a list of potential suspects but you don’t end up violating rights by issuing undue warrants.

Arresting people because AI said so? Let’s fucking go. Can’t wait for the AI model that just returns Yes for everything.

Core utils has this AI built in: yes

Facial recognition should always be a clue, never evidence. It should have the same weight as eyewitness testimony, because the algorithms will always have personal biases from their dataset. Otherwise, we risk lawyers saying stuff like “the algorithm gives a 99% confidence this is you” and the jury thinks this is some objective measure. Meanwhile, the algorithm only has 1% BIPOC in its dataset and labels with high confidence lots of them as being the same person.

Reminds me of the movie Anon, with this jaw-dropping quote at the end: “It’s not that I have something to hide. I have nothing I want to show you.”

flaws in identifying women and people with dark skin highlighted.

Why did I read this as women using makeup to highlight their cheekbones.

Haha, if you quickly skipped the “and people” part. Happens all the time. Brain cycles are expensive.