According to the documents, Cellebrite could not unlock any iPhones running iOS 17.4 or newer as of April 2024, labeling them as “In Research.” For iOS versions 17.1 to 17.3.1, the company could unlock the iPhone XR and iPhone 11 series using their “Supersonic BF” (brute force) capability. However, iPhone 12 and newer models running these iOS versions were listed as “Coming soon.”

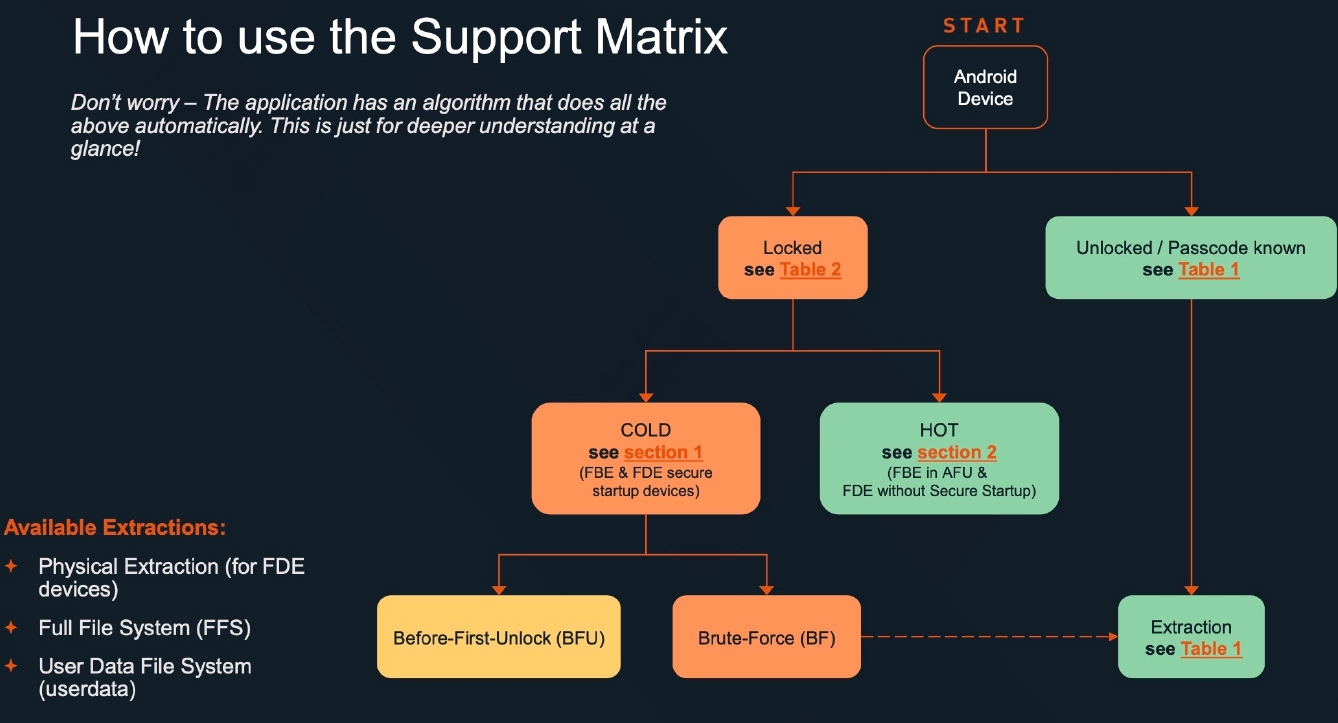

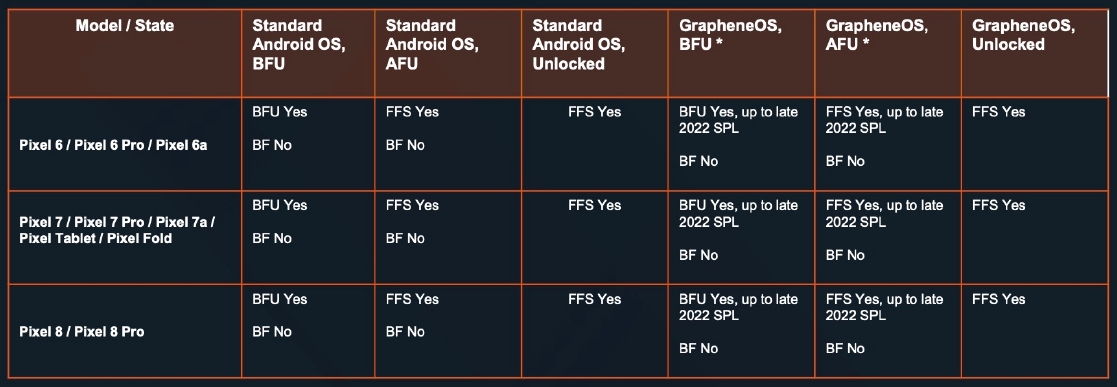

The Android support matrix showed broader coverage for locked Android devices, though some limitations remained. Notably, Cellebrite could not brute force Google Pixel 6, 7, or 8 devices that had been powered off. The document also specifically mentioned GrapheneOS, a privacy-focused Android variant reportedly gaining popularity among security-conscious users.

Links to the docs:

GrapheneOS has a thread about this on Mastodon, which adds a bit more detail:

Cellebrite was a few months behind on supporting the latest iOS versions. It’s common for them to fall a few months behind for the latest iOS and quarterly/yearly Android releases. They’ve had April, May, June and July to advance further. It’s wrong to assume it didn’t change.

404media published an article about the leaked documentation this week. It doesn’t analyze the leaked information in as much depth as we did, but it didn’t make any major errors. Many news publications are now writing highly inaccurate articles about it following that coverage.

The detailed Android table showing the same info as iPhones for Pixels wasn’t included in the article. Other news publications appear to be ignoring the leaked docs and our thread linked by 404media with more detail. They’re only paraphrasing that article and making assumptions.

We received Cellebrite’s April 2024 Android and iOS support documents in April and from another source in May before publishing them. Someone else shared those and more documents on our forum. It didn’t help us improve GrapheneOS, but it’s good to know what we’re doing is working.

It would be a lot more helpful if people leaked the current code for Cellebrite, Graykey and XRY to us. We’ll report all of the Android vulnerabilities they use whether or not they can be used against GrapheneOS. We can also make suggestions on how to fix vulnerability classes.

In April, Pixels added a reset attack mitigation feature based on our proposal ruling out the class of vulnerability being used by XRY.

In June, Pixels added support for wipe-without-reboot based on our proposal to prevent device admin app wiping bypass being used by XRY.

In Cellebrite’s docs, they show they can extract the iOS lock method from memory on an After First Unlock device after exploiting it, so the opt-in data classes for keeping data at rest when locked don’t really work. XRY used a similar issue in their now blocked Android exploit.

GrapheneOS zero-on-free features appear to stop that data from being kept around after unlock. However, it would be nice to know what’s being kept around. It’s not the password since they have to brute force so it must be the initial scrypt-derived key or one of the hashes of it.
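To illustrate the reasoning above (what lingers in memory is a derived key or a hash of it, not the password, so an attacker still has to brute force), here is a minimal Python sketch. It is not GrapheneOS's or Android's actual scheme: the scrypt parameters, the `verifier_for` construction, and all function names are assumptions made up for illustration.

```python
import hashlib
import hmac
import os


def derive_key(password: str, salt: bytes, n: int = 2**14) -> bytes:
    """Derive a 32-byte key from a password with scrypt.

    The cost parameters (n, r=8, p=1) are illustrative assumptions,
    not the values used by any real device.
    """
    return hashlib.scrypt(password.encode("utf-8"), salt=salt, n=n, r=8, p=1, dklen=32)


def verifier_for(key: bytes) -> bytes:
    """Hypothetical 'hash of the derived key' that might linger in memory."""
    return hashlib.sha256(b"verifier" + key).digest()


def try_guess(guess: str, salt: bytes, leaked_verifier: bytes, n: int = 2**14) -> bool:
    """An attacker holding only the verifier must rerun scrypt for every guess."""
    candidate = verifier_for(derive_key(guess, salt, n))
    return hmac.compare_digest(candidate, leaked_verifier)


if __name__ == "__main__":
    salt = os.urandom(16)
    leaked = verifier_for(derive_key("correct horse", salt))
    for guess in ["123456", "password", "correct horse"]:
        print(guess, try_guess(guess, salt, leaked))
```

The point of the sketch is only that recovering a verifier does not reveal the password directly. Each candidate still costs one full scrypt evaluation, which is why the length of the password continues to matter.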

Nice to see some benefit to updated vanilla AOSP, Graphene, and other options.

It goes without saying but it seems like a deeply fucked business model to hoard zero-days that could cause billions in damage or safety issues if they fall into the wrong hands, in order to keep your mercenary surveillance product working.

Mfw Samsung on android 6 is the most secure 😮. Wonder if it has something to do with the mid boot password option that was around

Edit: Downvoted in 10 seconds wtf

> Mfw Samsung on android 6 is the most secure 😮. Wonder if it has something to do with the mid boot password option that was around

More likely: So old, no point in even attempting, therefore not supported.

That’s taking “security through obscurity” to a whole new level.

I think it’s probably a demand issue. There’s just not that many devices running with that Android version, and it takes a lot of time and money to pay engineers to find and use extremely complex state of the art exploits.

So security through obscurity

Right. If you’re a sufficiently wanted criminal, I’m sure they could build a custom exploit for you. You’re not really secure.

Probably not economical to include it in their normal package.

> The document also specifically mentioned GrapheneOS

Okay…what’d it say? Baffling that the author didn’t think that was important…

I mean, the docs are available through the link. But here are some screen grabs:

AFU: After First Unlock

BFU: Before First Unlock

BF: Brute Force

FBE: File Based Encryption

FDE: Full Disk Encryption

FFS: Full File System

:(

Android user? Graphene looks like it’s worth the setup process more and more. Pixels are the only Google product worthwhile thanks to Graphene really.

The only docs I saw pertained to iOS.

Just like the link in the iOS section, there’s a link in the Android section. Both were in the first sentence, I believe. But I had to give the article another once over to find it.

It says it’s immune since 2023 updates

Nice, thanks

Use a long password and you’ll be immune from their before first unlock brute force.
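A rough back-of-the-envelope sketch of why length matters for before-first-unlock brute force. The guess rate below is a made-up assumption (the leaked documents don't give one), and the helper names are just for illustration:

```python
import math

# Hypothetical guess rate; NOT a figure from the Cellebrite documents.
ASSUMED_GUESSES_PER_SECOND = 10.0


def keyspace(alphabet_size: int, length: int) -> int:
    """Number of possible secrets of exactly this length."""
    return alphabet_size ** length


def worst_case_seconds(alphabet_size: int, length: int,
                       rate: float = ASSUMED_GUESSES_PER_SECOND) -> float:
    """Time to try every candidate at the assumed guess rate."""
    return keyspace(alphabet_size, length) / rate


def entropy_bits(alphabet_size: int, length: int) -> float:
    """Entropy of a uniformly random secret, in bits."""
    return length * math.log2(alphabet_size)


if __name__ == "__main__":
    candidates = [
        ("6-digit PIN", 10, 6),
        ("8 lowercase letters", 26, 8),
        ("16 mixed-case letters and digits", 62, 16),
    ]
    for label, alphabet, length in candidates:
        years = worst_case_seconds(alphabet, length) / (365 * 24 * 3600)
        print(f"{label}: {entropy_bits(alphabet, length):.1f} bits, "
              f"~{years:.3g} years worst case")
```

Whatever the real guess rate is, the keyspace grows exponentially with length, so each extra character multiplies the work rather than adding to it. This only holds if the password is random. A long but guessable phrase doesn't get the same benefit.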

Passcodes are trivial to break.

Incorrect. Read the links.

Edit: I missed the “first”, you’re not saying fully immune. My bad.

They’re exploiting vulnerabilities and back doors not brute forcing your passcode. The only way you’re keeping them out is with hardware encryption which the iPhone has and probably why it’s the only one not vulnerable. Hardware encryption also won’t matter if your vendor shares their keys with law enforcement. As far as I’m aware, Apple is the only one that’s gone to court and successfully defended their right to refuse access to encryption keys.

Don’t put anything incriminating on your phones.

In a before-first-unlock state they absolutely are bruteforcing, since the filesystem is encrypted. The exploits are for bypassing the retry limit in that case.

And the manufacturers don’t have your encryption keys. They’re unique.
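To make the retry-limit point concrete, here's a toy model of what an attempt throttle does to brute force time, and what changes if an exploit removes it. The delay schedule is entirely hypothetical (not any real phone's policy), as are the per-guess cost and function names:

```python
def throttle_delay(failed_attempts: int) -> float:
    """Hypothetical lockout schedule, in seconds.

    No delay for the first 5 failures, then 30 s doubling with each
    further failure, capped at one day. Purely illustrative.
    """
    if failed_attempts < 5:
        return 0.0
    exponent = min(failed_attempts - 5, 12)  # bound the power before capping
    return min(30.0 * 2 ** exponent, 86400.0)


def total_seconds(guesses: int, seconds_per_guess: float, throttled: bool) -> float:
    """Total time to make `guesses` attempts, with or without the throttle."""
    total = 0.0
    for failed in range(guesses):
        total += seconds_per_guess
        if throttled:
            total += throttle_delay(failed)
    return total


if __name__ == "__main__":
    pin_space = 10 ** 4  # every 4-digit PIN
    with_limit = total_seconds(pin_space, 0.1, throttled=True)
    without_limit = total_seconds(pin_space, 0.1, throttled=False)
    print(f"throttled:   ~{with_limit / 86400:.0f} days")
    print(f"unthrottled: ~{without_limit / 60:.0f} minutes")
```

In this model the throttle, not the encryption, is what makes a short PIN expensive to guess. That's why an exploit that bypasses the retry limit is valuable to an attacker even though it doesn't break the encryption itself.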

Huh? None of this makes sense to me. Apple and Google do not have encryption keys for your device, regardless of hardware or software. When your phone is unlocked, the key and/or hash is in memory, and may be key wrapped, regardless of hardware or software. iOS is listed as vulnerable to the attacks, minus the latest devices and versions, which is pretty much the norm with lagging development. Same with latest Pixels. Where are you getting this info from?

The Secure Enclave is a component on Apple system on chip (SoC) that is included on all recent iPhone, iPad, Apple Watch, Apple TV and HomePod devices, and on a Mac with Apple silicon as well as those with the Apple T2 Security Chip. The Secure Enclave itself follows the same principle of design as the SoC does, containing its own discrete boot ROM and AES engine. The Secure Enclave also provides the foundation for the secure generation and storage of the keys necessary for encrypting data at rest, and it protects and evaluates the biometric data for Face ID and Touch ID.

https://support.apple.com/guide/security/hardware-security-overview-secf020d1074/web

The FBI wanted access to Apple’s encryption keys which they use to sign their software. They don’t have ‘your’ encryption keys, they have their own that the FBI wanted to use to bypass these features. They eventually dropped it because they found a zero day exploit which Apple fixed in later versions. That is why the newer phones aren’t vulnerable (yet).

Familiar, but based on your first comment about the benefits of hardware encryption over software encryption, and thus iOS being better than Android, perhaps you’re misinterpreting the specifics?

For the first point, the SE only stores keys at rest. The keys and hashes are still in memory when booted, otherwise the device wouldn’t be able to function. This works the same as software encryption, the key itself is just encrypted and stored on “disk” instead of in flash when off.

For the second, Apple’s software signing keys would not give the FBI access to a device. There is nothing to “turn over”. The signing of new software to bypass the lock was to remove the 10 retry reset. As above, there is no benefit to hardware encryption over software here.

The benefit hardware encryption brings is potential speed (which is certainly valuable, but not necessarily more secure or harder to crack).

I’m not claiming iPhones are superior. I don’t care about dumb OS wars, just don’t put things on your phone expecting that they can’t be retrieved. That’s the only point I’m trying to make here.

And the keys absolutely would give them access since those keys are used to sign Apple software which runs with enough privileges to access the encryption keys stored in the “Secure Enclave”. Anything you entrust to a company’s software is only as secure as the company wants to make it, and the only company to publicly resist granting that access is Apple (so far).

> The only way you’re keeping them out is with hardware encryption

This was the hardware vs software comment I was debating, not the rest.

Also, software signing keys (like those requested by the FBI) would work for enabling brute force since that’s a change to the software, but not for direct access into SE. That would be like saying a firmware update could grant access to a LUKS partition without the passphrase. Not possible. If it was, no open source encryption would ever work.

The only thing that has successfully managed to thwart the FBI in their attempts to break into a phone was Apple’s hardware based encryption. To such an extent that they took legal and legislative actions to try and circumvent it. The specifics of how the encryption works are irrelevant to this argument, and you are more than welcome to consider that point conceded.