All in all pretty decent sorry I attached a 35 min video but didn’t wanna link to twitter and wanted to comment on this…pretty cool tho not a huge fan of mark but I prefer this over what the rest are doing…

The open source AI model that you can fine-tune, distill and deploy anywhere. It is available in 8B, 70B and 405B versions.

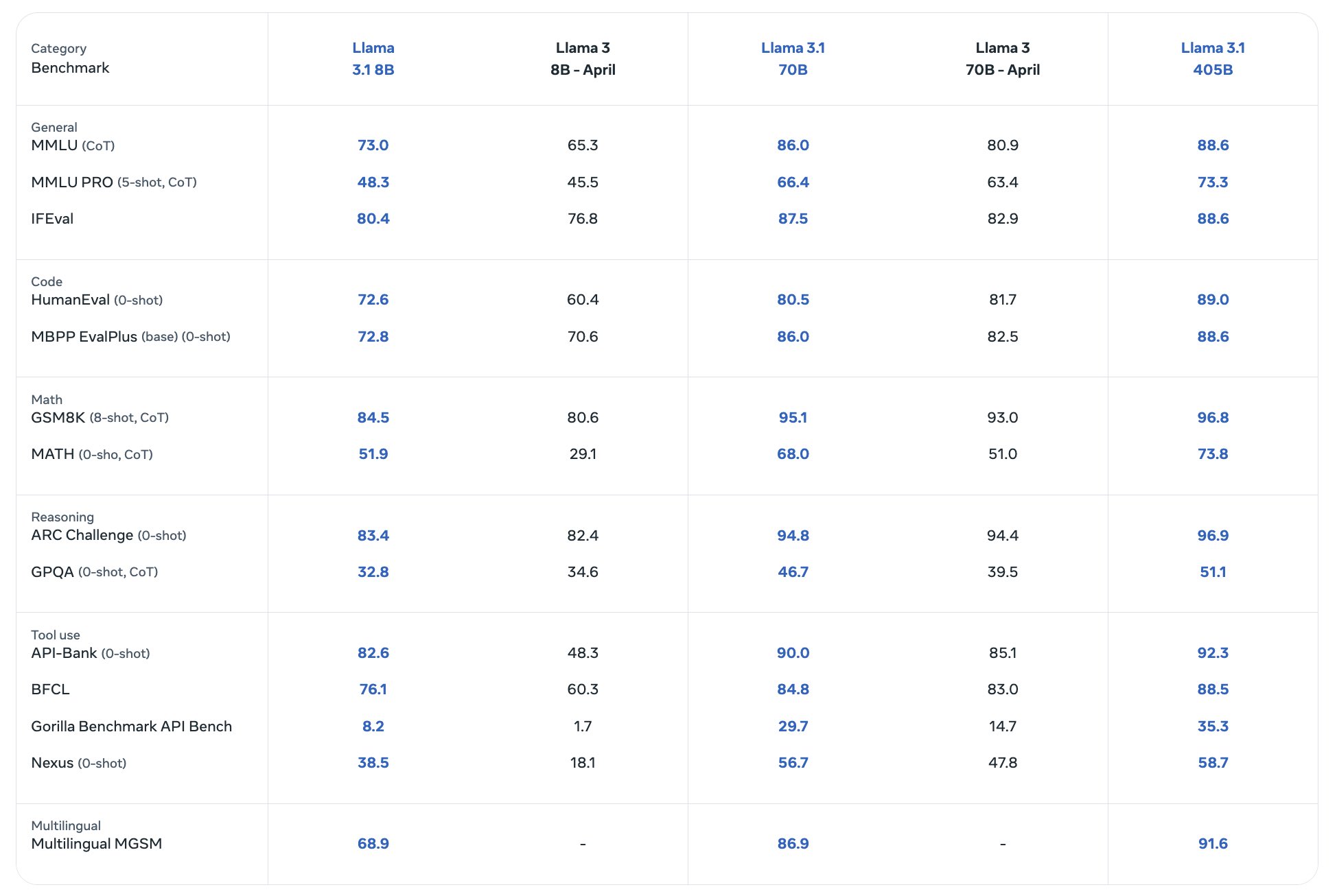

Benchmarks

The Llama licence isn’t open source because of the restrictions it has.

What are the restrictions?

Are there any open source models people would normally use?

https://opensource.org/blog/metas-llama-2-license-is-not-open-source

The actual licence is here: https://ai.meta.com/llama/license/

iv. Your use of the Llama Materials must comply with applicable laws and regulations (including trade compliance laws and regulations) and adhere to the Acceptable Use Policy for the Llama Materials (available at https://ai.meta.com/llama/use-policy), which is hereby incorporated by reference into this Agreement.

v. You will not use the Llama Materials or any output or results of the Llama Materials to improve any other large language model (excluding Llama 2 or derivative works thereof).

- Additional Commercial Terms. If, on the Llama 2 version release date, the monthly active users of the products or services made available by or for Licensee, or Licensee’s affiliates, is greater than 700 million monthly active users in the preceding calendar month, you must request a license from Meta, which Meta may grant to you in its sole discretion, and you are not authorized to exercise any of the rights under this Agreement unless or until Meta otherwise expressly grants you such rights.

Thank you. Very informative.

So, do not train other LLMs with it.

Do not use it in hugely successful global products.

The issue is that “open source” is a term for computer software. And it doesn’t really apply to other things. But people use it regardless. With software, it means you share the recipe, the program code. With machine learning models, there isn’t really such a thing. It’s a pile of numbers (the weights) that are the important thing. They get shared in this case. But you can’t reproduce them. For that you’d need the dataset that went in (which Meta doesn’t share because lots of that is copyrighted and they have several court cases running because they just stole the texts and said it’s alright.) But what open source allows (amongst other things) is to build upon things and modify them. And that can be done with the models to a certain degree. They can be fine-tuned and incorporated in custom projects. In the end they (Meta) want to frame things a certain way and be the good guys. But the term still doesn’t really mean what it’s supposed to mean.

There are other models with other licenses. There are Apache-licensed models available. There are models which do or don’t allow for commercial usage. We also have some with the datasets and everything available. But at least those aren’t state of the art anymore.

Thanks. I know all this; I was just lazy and continued to use the terminology used in the post or thread.

So, what are the restrictions? That you can’t use them commercially, for example?

And if an average Joe wants to re-create a “for personal use” Jarvis, what would they use today?

I haven’t looked it up. If it’s the same as before there aren’t that many restrictions. You can’t use it commercially if you have like more than 700 million users. So basically if you’re Google, Amazon or OpenAI. Everyone else is allowed to use it commercially. They have some stupid rules how you have to call your derivatives. And they’re not liable. You can read the license if you’re interested.

What people would use? They’d use exactly this. Probably one of the smaller variants. This is state of the art. Or you’d use some of the competing models from a few weeks or months back. Mistral, … You’d certainly not train anything from ground up. Because it costs millions of dollars in electricity and hardware (or cost for renting hardware).

Thank you!

Yeah more or less open source to these guys is just like saying they didn’t close out any parts of the code…which they didn’t but beyond that I agree with you totally.

What do 8B, 70B, and 405B refer to?

Parameter count. 8 billion … Colloquially the model size, and hence how smart it is. 405 billion parameters is big. We didn’t have anything even close to that size and with current technology to download and tinker around, until just now.

I mean, from what I can tell we still don’t, at least as home users. The full size model won’t fit on any commercial hardware. Even with a top of the line 4090 GPU you’re limited to the 8B model if you want to run it offline, and that still charts lower than the last-gen 70B model.

Still cool to have it be available, though.

The full size model barely runs on 160 GB VRAM and something like 200 GB CPU buffer. I’m trying to scale it across many GPUs but haven’t had much luck yet.

Pretty sure a good chunk of people are actually running 70B models tho

There are ways to bring the models down in size at the cost of accuracy and I believe you can trade off performance to split them across the GPU and the CPU.

Honestly, the times I’ve tried the biggest things out there out of curiosity it was a fun experiment but not a practical application, unless you are in urgent need of a weirdly taciturn space heater for some reason.

Yeah, I prefer to use EXL2 models. GGUF models split across GPU and CPU are slow af, I tried that too. But I’ve seen mutliple people on Reddit claim that they run 70B models on cards like 4090s.

Yeah, the smaller alternatives start at 14 GB, so they do fit in the 24 GB of the 4090, but I think that’s all heavily quantized, plus it still runs like ass.

Whatever, this is all just hobbyist curiosity stuff. My experience is that running these raw locally is not very useful in any case. People underestimate how heavy the commercial options are, how much additional work goes into them beyond the model, or both.

The low quant versions of a 70B model are still way better than a high quant version of an 8B model tho. But yeah, performance might be ass, I don’t have anything like a 4090, so I couldn’t tell you. The main thing I do with these locally run models is use it for SillyTavern, which lets you kinda do roleplay with fictional characters. That’s kinda fun sometimes. But I don’t really use it much besides that either. Just testing how well different models perform and what I can run on my GPU is kinda fun in itself too tho.

Sure. It’s big. I think they linked some cloud services where you can run it. Like HuggingFace(?), Azure, Amazon, … And we have some services available like runpod.io where you also can rent a Linux machine by the minute. With several datacenter NVidia cards with 80GB VRAM each.

It won’t run on a normal high-end gaming PC at that size. I think a Mac Studio with lots of RAM can do it. Or you’d need to buy several of the very expensive NVidia cards. But I think that’s exactly why they gave us the other variants with less parameters.

I’m happy that they released it anyways. Before that it was just a game for the big players and nobody could participate. Now we have it and no one can take it away. It is certainly possible to run it. Albeit not easy to run at home. But it’s like that with lots of things in life. Sometimes the professional tools or expensive infrastructure aren’t affordable for private people. But we can share and rent such things.

What is the parameter count for the famous proprietary models like gpt 4o and claude 3.5 sonnet?

They don’t tell. There is lots of speculation out there. In the end I’m not sure if it’s a good metric anyways. Progress is fast. A big model from last year is likely to be outperformed by a smaller model from this year. They have different architecture, too. So that count alone doesn’t tell you which one is smarter. A proper benchmark would be to compare the quality of the generated output, if you’re interested to learn which one’s the smartest. But that’s not easy.

I am not really concerned with which one is better or smarter but with which one is more resource intensive. There is a lot of opacity about the cost in a holistic sense. For example, a recent mini model from OpenAI is the cheapest smart (whatever that may mean) model available right now. I wanna know if the low cost is a product of selling on a loss or low profit margin, or of an abundance of VC money and things like that.

Well, I don’t know if OpenAI does transparency and financial reports. They’re not traded at the stock exchange so they’re probably not forced to tell anyone if they offer something at profit or at a loss. And ChatGPT 4o mini could be way bigger than a Llama 8B. So automatically also more resource intensive… Well… it depends on how efficient the inference is. I suppose there’s also some economy of scale.

Number of training parameters. 8B indicates 8 (B)illion parameters.

https://www.thecloudgirl.dev/blog/llm-parameters-explained

405B for an opensource model is insane btw.

From the benchmarks it seems like it’s actually a noticable improvement over Llama 3. Llama 3 was already a lot better than Llama 2 (from actually using it, not just benchmarks), so I’m really interested in how good this actually is in practice.

Did the zuckerbot undergo some sort of fuckboi exterior upgrade?

I think they crossed him with a Llama

Haha well at least under the hood he seems semi normal I remember videos of him past few years with a stern look on his face talking like a robot were so cringe… But ya

Never mind that, what the hell is up with his face? Why does he look like a negative image of someone who hasn’t slept in months? Did he get replaced by his ginger cousin? Is he being played by Jesse Eisenberg again, just with a deepfake filter?

He’s been playing in the sun with sunglasses on.

So I guess we’re never getting that 29-32B model