Is this loss?

Errrrrrrrrrr

They said AI would take you places. They never said they were places you wanted to go.

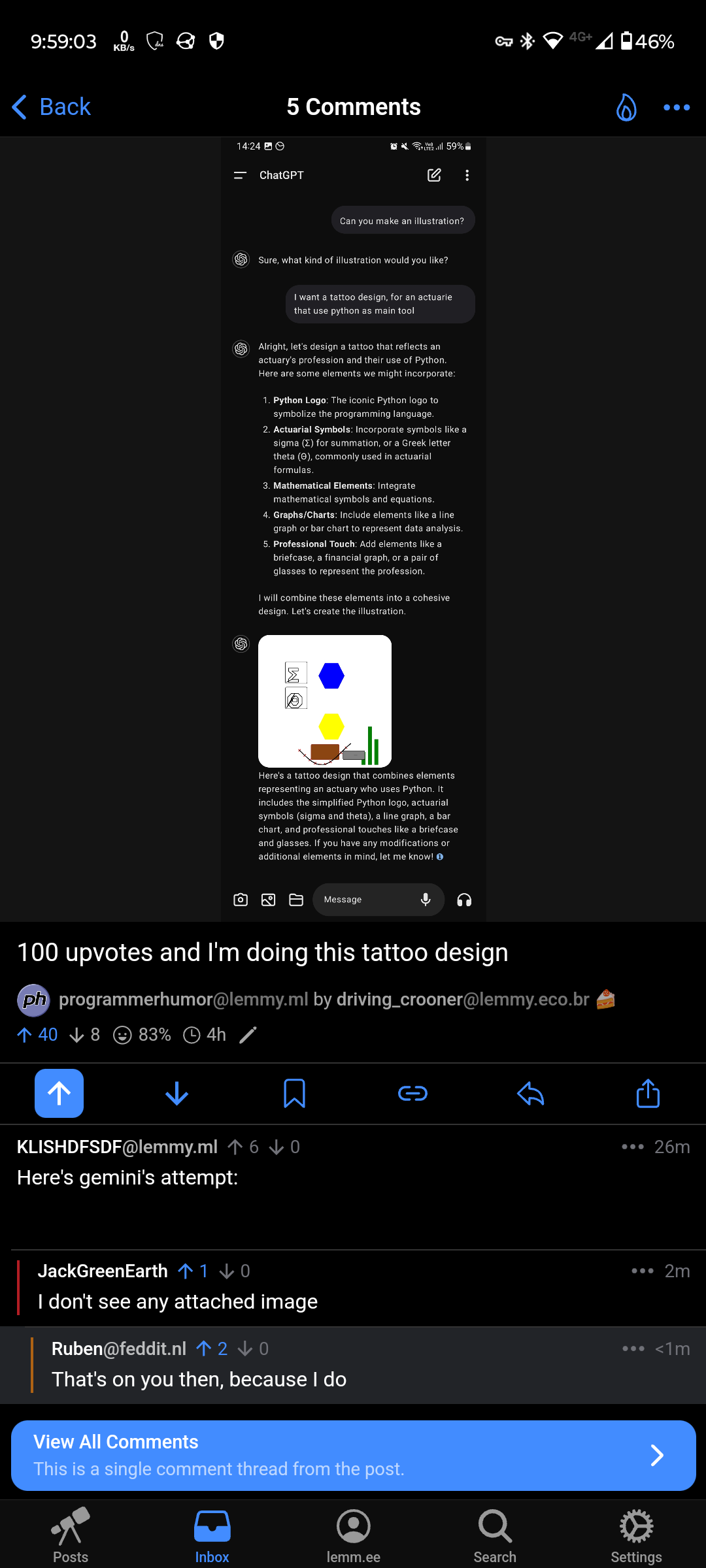

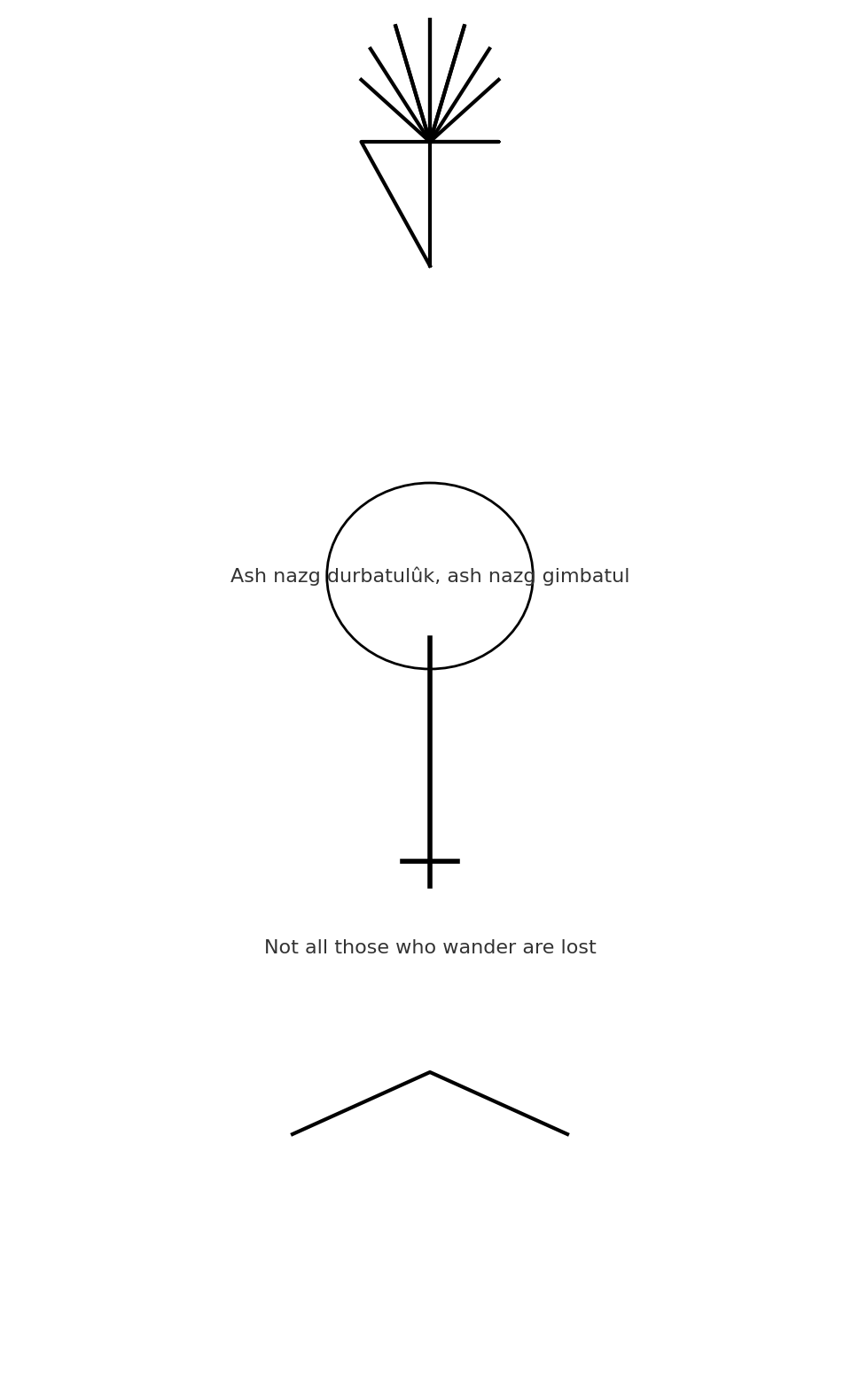

When we say LLMs don’t know or understand anything, this is what we mean. This is a perfect example of an “AI” just not having any idea what it’s doing.

- I’ll start with a bit of praise: it does a fairly good job of decomposing Python and the actuarial profession into elements that are representative of those realms.

But:

- In the text version of the response, there are already far too many elements for a good tattoo, which shows it doesn’t understand tattoo design, or even just design.

- In the drawn version, the design uses big blocks of color with no detail, which would look like shit inked on someone’s skin (even if they looked good on a white background, and they don’t). So again, no understanding of tattoo art.

- It produces a “simplified version” of the python logo. I assume those elements are the blue and yellow hexagons, which are at least the correct colors. But it doesn’t understand that, for this to be PART OF THE SAME DESIGN, they must be visually connected, not just near each other. It also doesn’t understand that the design is more like a plus; nor that the design is composed of two snakes; nor that the Python logo is ALREADY VERY SIMPLE, nor that the logo, lacking snakes, loses any meaning in its role of representing Python.

- It says there’s a briefcase and glasses in there. Maybe the brown rectangle? Or is the gray rectangle meant to be a briefcase lying on its side so the handle is visible? No understanding here of how humans process visual information, or what makes a visual representation recognizable to a human brain.

- Math stuff can be very visually interesting. Lots of mathematical constructs have compelling visuals that go with them. A competent designer could even tie them into the Python stuff in a unified way; like, imagine a bar graph where the bars were snakes, twining around each other in a double helix. You got math, you got Python, you got data analysis. None of this ties together, or is even made to look good on its own. No understanding of what makes something interesting.

- Everything is just randomly scattered. Once again, no understanding of what design is.

AIs do not understand anything. They just regurgitate in ways that the algorithm chooses. There’s no attempt to make the algorithm right, or smart, or relevant, or anything except an algorithm that’s just mashing up strings and vectors.

I was hoping for a sand clock and the Python snake, but now I’m not sure if the sand clock is an international actuarial thing or just a Brazilian one. But for mathematical notation related to actuarial science, the annuity [1] is the main one, so 2/10.

See, that’s a cool symbol. Make the right angle part of that symbol into a snake, you’re done. 1000% better than the AI’s mess.

Might be a Brazilian thing with the sand timer, but the annuity ä is imprinted on my brain, and it’s been years since those exams. The tattoo needs some element of “what was this value last year?”
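For anyone who hasn’t sat those exams: the annuity-due symbol ä is usually written with the “angle” over the term n, which is the right-angle part mentioned above. A standard definition, assuming a level effective interest rate i per period:

```latex
\ddot{a}_{\overline{n}|} = \sum_{k=0}^{n-1} v^{k} = \frac{1 - v^{n}}{d},
\qquad v = \frac{1}{1+i},
\qquad d = 1 - v = \frac{i}{1+i}
```

The discount factor v is what pulls each payment back one period, which is roughly the “what was this value last year?” element.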

Please say an AI wrote this

It’s kind of adorable, like a child designing an album cover using concepts they recognize but don’t understand

I put in the same prompt and got this. GPT never breaks down a request with “here are some elements I can include” etc.; do you think this is in the custom instructions or something?

The details can be fixed but the general idea is good.

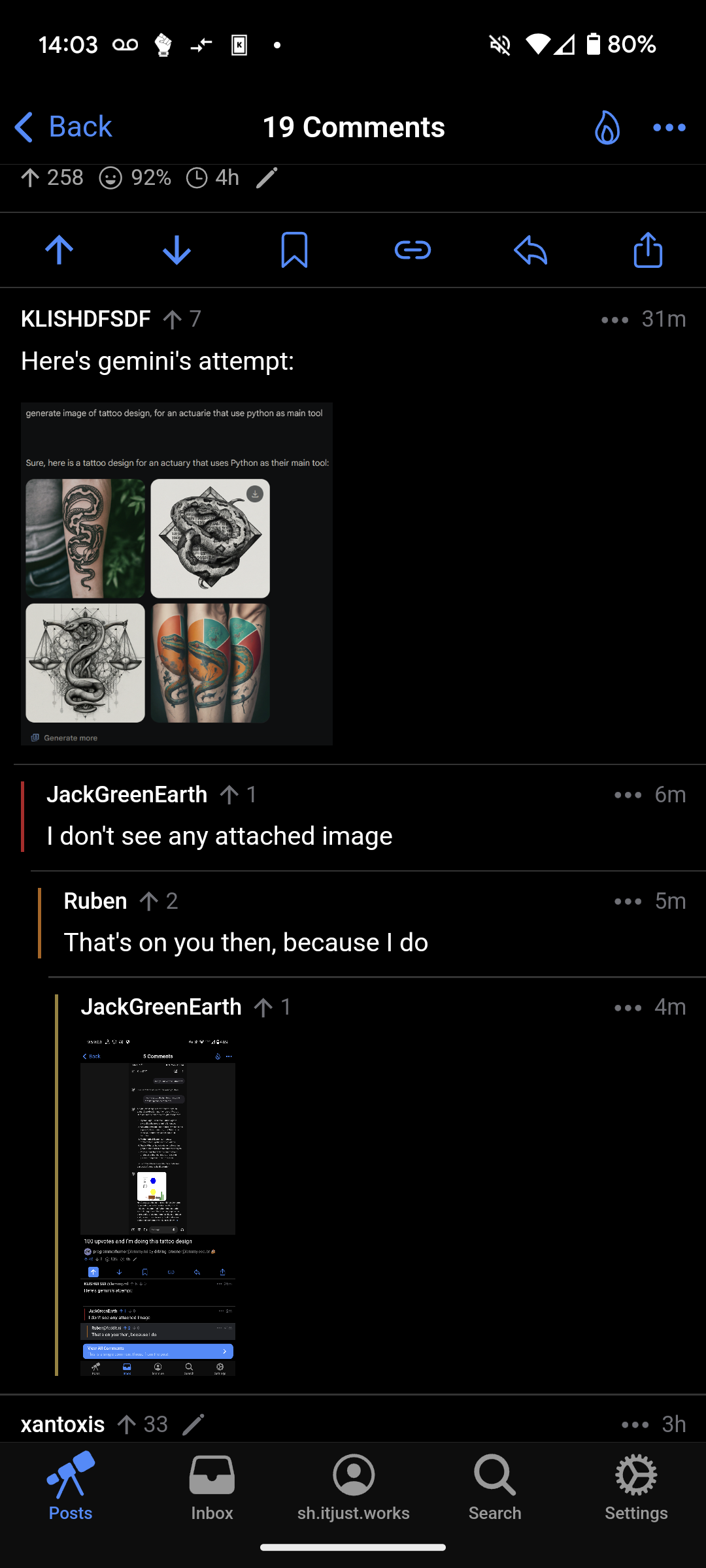

Here’s Gemini’s attempt:

Okay the image is messy but the snake coiling around the scales is actually a sick concept.

The last one is a cool concept, but pie charts are pretty useless lmao

I’m going to get that last one. “What’s on your arm?” “Oh, it’s just a third of it, put my three arms together.”

I’m pretty sure it’s from different perspectives since it’s wrapping around the arm

Yup, normal picture for tats that you can’t get in one pic. I mean, that’s why it generated like that: a ton of those pics exist and got shoved into the magic picture box.

True!!

Duhhhhh

I’m not much of a tattoo expert. Designs on my body include:

If you want a snake and a pie chart, at least have the snake do something with it like carrying the chart in its mouth.

Perhaps you can do the biblical scene of the snake tempting Adam and Eve but this time it’s the snake tempting managers with a useless pie chart.

Adam and Eve but it’s an apple pie (chart)

Snake coiled around the pie chart swallowing its own tail.

Topology nightmare snake #2

Klein snake

I don’t see any attached image

That’s on you then, because I do

Weird. We’re both using Android Voyager, what’s the difference?

You’re on different instances. It’s probably something to do with that.

It’s probably because of something your instance admins did. For me it didn’t show up either, so I looked at the link:

https://lemm.ee/api/v3/image_proxy?url=https%3A%2F%2Fi.imgur.com%2Fi2IzTtY.png

And it responded with 429 “Too Many Requests”.

But the URL is clearly pointing to Imgur, so why the middle-man? Just URL-decode it and here ya go
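A minimal sketch of that decoding step in Python, assuming (based on this one link only) that the proxy always carries the original address in a `url` query parameter; `unwrap_proxy_url` is a hypothetical helper name:

```python
from urllib.parse import urlsplit, parse_qs


def unwrap_proxy_url(proxy_url: str) -> str:
    """Return the original image URL from the proxy's `url` query parameter.

    Assumes the parameter is named `url`; raises KeyError if it is missing.
    """
    query = urlsplit(proxy_url).query
    # parse_qs percent-decodes the values, so %3A%2F%2F turns back into ://
    params = parse_qs(query)
    return params["url"][0]


proxy = "https://lemm.ee/api/v3/image_proxy?url=https%3A%2F%2Fi.imgur.com%2Fi2IzTtY.png"
print(unwrap_proxy_url(proxy))  # https://i.imgur.com/i2IzTtY.png
```

This skips the instance’s proxy entirely, so it only helps when the proxy (not the image host) is the thing returning 429.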

Lord of the Rings inspired.

The bottom arrow is “the landscape of the Shire and mountains at the bottom”.

AI is surely weeks away from taking our jobs

Truth, since you didn’t say how many weeks

Wtf!

Anyone found the “simplified python logo”?

My guess is the blue and yellow hexagons.

I’m still looking for the glasses to show op is a professional.

The gray box kinda looks like VR glasses.

Sooo, will the prompt be part of your tattoo as well?

A “haha, I got an obscure funny meme” tattoo is a terrible idea, but you do you.

You saying my Trogdor the Burninator tattoo was a bad idea? You been talking to my wife? Well, the both of you can fuck right the fuck off!

definitely not an obscure meme

Didn’t use to be. I’d get stopped any time I was wearing shorts (the tattoo is on my calf), but that hasn’t happened in almost 10 years.

But Trogdor is cool.

Trogdor burns in the night, that’s how cool Trogdor is.

“Cohesive”

In case you get it tattooed, also put the entire conversation next to it. It would be funny for at least a few years. After that, probably no one will remember what a ChatGPT is.

Lord, we can only hope.

Getting both generic and specific models shoved down my throat by $multiNationalCorp on a daily basis is exhausting.

If I write an email that annoys someone and costs the company money, I’m thrilled to bits to own it and admit I fucked it up. But I don’t have the time or energy to make sure that AI isn’t turning a reasonable email into something rage-inducing just by missing an obvious nuance. I’m certainly not hanging the quality of my code on the strength of an AI “helper.”

Looks like the output you’d expect from a three-year-old

My main concern with people making fun of such cases is that it makes the deficiencies of “AI” harder to find and detect, even though they are obviously still present.

Whenever someone publishes a proof of a system’s limitations, the company behind it gets a test case to use to improve it. The next time we (the reasonable people arguing that cybernetic hallucinations aren’t AI yet and are dangerous) try to make such a point, we only get a reply of “oh yeah, but they’ve fixed it”. Even people in IT often don’t understand what they’re dealing with, so non-IT people may have even more difficulty…

Myself - I just boycott this rubbish. I’ve never tried any LLM and don’t plan to, unless it’s used to work with language, not knowledge.

You’ve got my vote.

And my Axe!